Meta has announced a major upgrade to its child protection systems on Facebook and Instagram, deploying new artificial intelligence tools designed to estimate users’ ages based on visual cues extracted from photos and videos shared on its platforms.

The move comes amid growing regulatory and legal pressure on the U.S. tech giant, which has repeatedly been accused of failing to adequately protect underage users online.

The European Commission recently concluded that Meta had breached the European Union’s Digital Services Act (DSA) requirements regarding the protection of children under 13. The finding adds to a series of legal challenges the company faces in the United States, where several states have raised concerns about the potential impact of social media platforms on teenagers’ mental health.

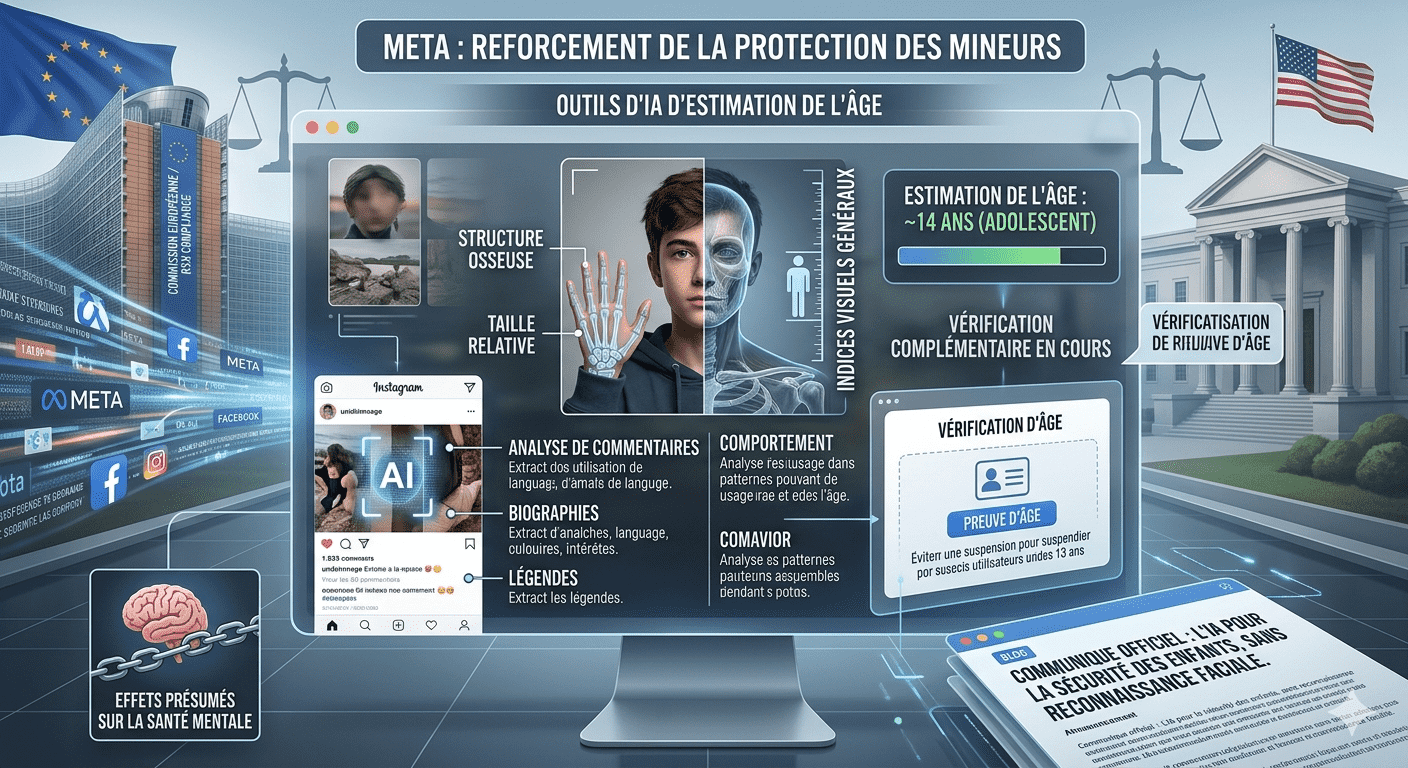

According to Meta, its existing AI systems already analyze textual and behavioral signals, such as comments, bios, and captions, to identify accounts potentially belonging to minors. The company now says it will expand these capabilities by incorporating general visual cues from user-generated content, including perceived body structure, to help estimate age ranges.

Meta stresses that this technology does not involve facial recognition. The company states that the system does not identify individuals but instead provides approximate age estimates based on aggregated visual signals.

The tech group adds that combining visual, textual, and behavioral analysis should improve the detection of accounts belonging to users under 13. When an account is flagged as potentially belonging to a minor, it may be suspended, and the user will be required to provide proof of age to restore access and avoid deletion.